For the past few years, the conversation around AI has focused on what’s visible. Bigger models, better benchmarks, faster gains. It’s an easy story to follow and an even easier one to sell. But that surface view misses where the real constraints are starting to show up. Not in the models themselves, but in the systems underneath them.

For a long time, I assumed China’s advantage in rare earths came down to luck. They had the deposits, the geology broke their way, and the rest of the world was playing catch-up.

That turns out to be almost entirely wrong.

In the 1980s, the United States was the global center of rare earth production. The Mountain Pass mine in California supplied much of the world. But more importantly, the U.S. didn’t just extract the material; it knew how to process it. Mining was only the first step. The real expertise lived in the chemistry, the separation, the ability to turn a messy mix of elements into something usable.

Rare earths aren’t rare in the way the name suggests. They exist in many places. The challenge is that they’re dispersed and chemically similar, which makes separating them incredibly difficult. Turning raw ore into individual elements like neodymium or dysprosium requires complex, multi-stage processes that are capital intensive, environmentally harsh, and technically demanding.

Over time, the U.S. moved away from that part of the business. Environmental concerns played a role. So did economics. Processing is expensive, dirty, and easy to push somewhere else. China leaned into it. Not just mining, but refining, separation, and eventually manufacturing. It treated the entire chain as something worth owning.

That decision compounded. China invested in facilities, trained engineers, absorbed the environmental cost, and built scale. It wasn’t a single breakthrough. It was a series of deliberate choices that made the country indispensable to the global supply chain. There’s a telling moment from 2010, when China restricted rare earth exports during a dispute with Japan. Prices spiked, supply chains scrambled, and industries far removed from mining suddenly felt exposed. What looked like a niche materials market revealed itself as a point of leverage.

Today, the imbalance is striking. The U.S. has restarted mining at Mountain Pass, but much of the material still travels abroad for processing. The capability that determines control, the ability to refine and separate at scale, sits largely elsewhere. The lesson isn’t about who has the resources. It’s about who builds the system around them. And systems like that don’t appear overnight. They are built patiently, often out of sight, with a focus that doesn’t depend on short-term returns.

The uncomfortable part of this story is how quiet it was while it was happening. No single moment where the U.S. “lost.” No headline that captured the shift. Just a steady erosion of capability, masked by the convenience of cheaper inputs and the illusion that someone else would always handle the hard part.

That pattern feels familiar.

Much of the conversation around AI still lives at the surface. Models, benchmarks, incremental gains that are easy to measure and easier to market. It makes for a clean narrative, the kind that fits neatly into headlines and conference stages. But spend time with the people actually building these systems and the conversation changes. The constraints are not abstract. They are physical, stubborn, and in many cases invisible until they fail.

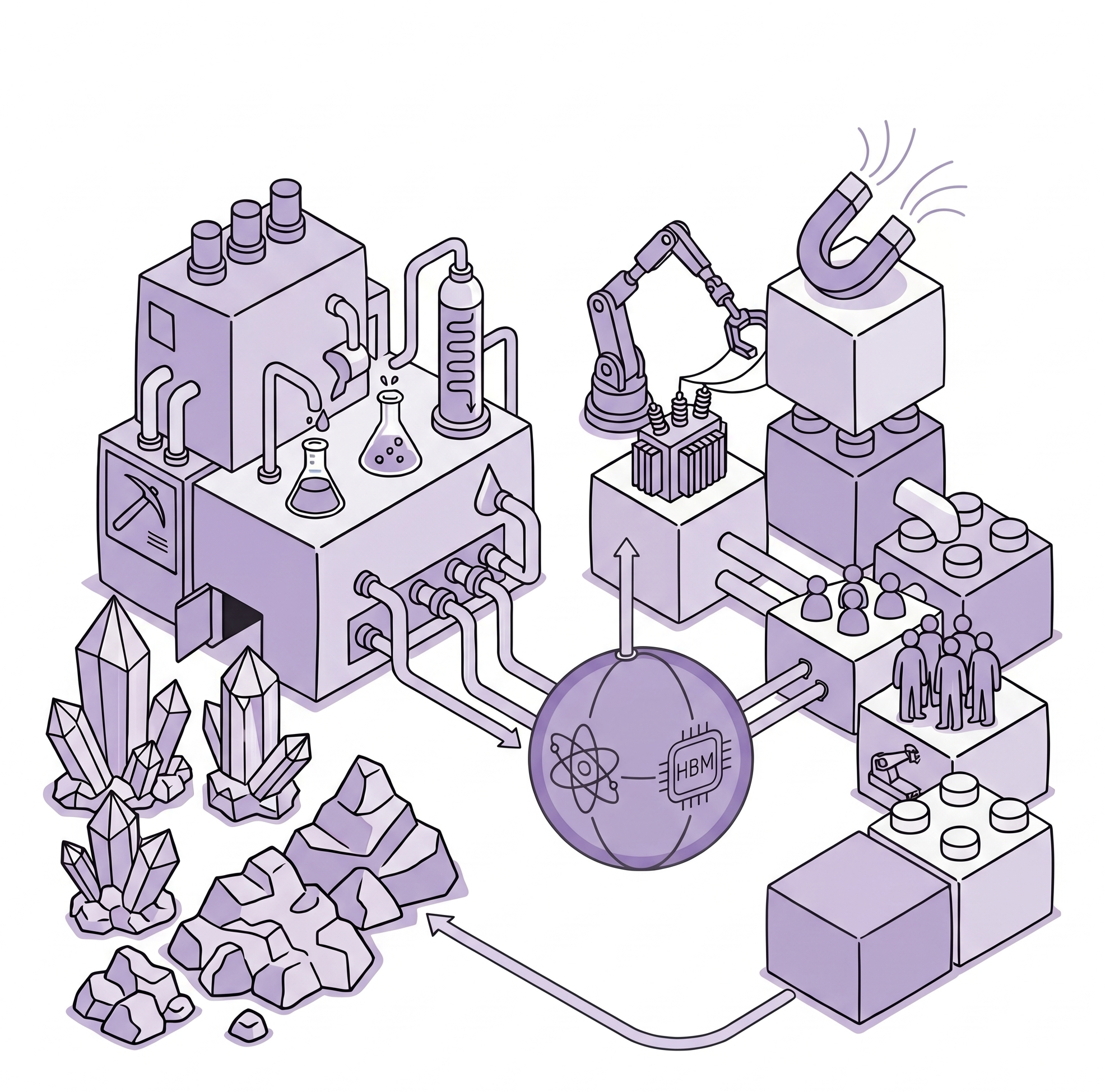

Take high-bandwidth memory. It doesn’t have the cultural weight of GPUs, but it quietly determines what those GPUs can do. Training large models requires moving massive volumes of data quickly, and that depends on memory systems that can keep pace. Those systems are produced by a small number of companies, concentrated in a few places. If supply tightens, progress slows. Not because the ideas have dried up, but because the underlying machinery can’t keep up with the ambition.

Or consider something even less visible. Transformers in the power grid. The hardware that steps voltage up and down so electricity can actually move across regions. They are large, custom-built, and slow to produce. Lead times can stretch into years. Data centers don’t run without them. You don’t scale compute without them either. It’s the kind of component most people never think about, until suddenly it becomes the reason a project is delayed.

The deeper you go, the more the story changes. It becomes less about who has the most advanced model and more about who can actually build and sustain the system that makes those models possible. Compute is only as good as the power behind it. Power is only as good as the infrastructure that delivers it. And that infrastructure depends on timelines that don’t bend easily.

There are signs the U.S. understands this, at least more than before. The scale of investment being discussed around data centers is enormous. Partnerships with companies like OpenAI are tied to commitments that exceed half a trillion dollars. If even a portion of that materializes, it will anchor a meaningful share of global AI capacity in the United States.

But scale introduces its own friction. All that compute needs energy, and a lot of it. The grid wasn’t built for this kind of demand. Expanding it takes time. Permitting alone can stretch for years. Projects get delayed, contested, slowed down by processes that were never designed for this pace of change. And that opens up a different set of questions. Not about what’s possible in theory, but about what can actually be built in practice, on time, at scale.

You start to see the pattern. The further downstream you go, the less this looks like a race over models and the more it looks like a race over systems. Who can move across the entire chain without getting stuck. Who can build not just the visible layer, but everything underneath it.

The rare earth story is useful because it strips away the abstraction. The U.S. didn’t lose because it lacked resources. It lost because it let the harder, less visible parts of the system move elsewhere. The expertise, the processing, the operational backbone. Once that foundation shifted, everything built on top of it became more fragile.

There is still time to get this right in AI. The investments are real. The urgency is there. But the lesson is not about moving faster at the surface. It’s about committing to the parts that take longer, cost more, and rarely get attention until they’re missing.

Rare earths were never about scarcity. And neither is this.

In government, the constraint isn’t whether the technology works. It’s whether it can survive the layer where things actually break. Integration across fragmented systems. Data that can’t move cleanly or safely. Procurement processes that stretch timelines and dilute intent. These aren’t edge cases. They are the environment. Most vendors stay at the surface because the layer underneath is slow, opaque, and hard to monetize. But it’s also where adoption is decided. The lesson isn’t to build faster models. It’s to build for the system as it exists, and to own the parts that others avoid.